NB: The usual blog disclaimer for this site applies to posts around HoloLens. I am not on the HoloLens team. I have no details on HoloLens other than what is on the public web and so what I post here is just from my own experience experimenting with pieces that are publicly available and you should always check out the official developer site for the product documentation.

Create a new project (I have Unity version 2018.2.13f1) and import the latest stable release of the HoloToolkit, 2017.4.3.0, into it. Then do the usual tweaks:

- Delete the Main Camera and add MixedRealityCameraParent.prefab to the Hierarchy; this will serve as the main camera.

- Change the camera settings to HoloLens.

- Rebuild the application in Unity, then build and deploy from Visual Studio to experience the app on HoloLens.

Bring your hand into view and raise your index finger so that it is tracked. Start rotating the astronaut with the Navigation gesture (pinch your index finger and thumb together), then move your hand far left, right, up, and down.

I put together a demo project here; you can import it into a new Unity project and deploy it to your device to take it for a spin. The demo is four simple cubes, each with a single interaction attached. From left to right: drag; resize without warp; resize with warp; rotate. Beyond gaze and air-tap, we can also see whether our cube can perform some actions just by talking to it. According to Microsoft Support, there are some basic built-in voice commands you can use for free, without having to write a single line of code.

I gave a developer session at a meetup the other week which was a bit of a ‘lap around’ getting started with HoloLens development and which covered a few areas, including:

- A 2D ‘Hello World’ app and how to deploy, run and debug it on both the HoloLens emulator and HoloLens.

- How to get hold of the HoloToolkit for Unity.

- A 3D ‘Hello World’ app in Unity.

- Adding support for Gaze.

- Adding support for Gesture.

- Adding support for Voice.

- Adding Spatial Mapping.

- Adding Spatial Sound.

All of this covers ground very similar to that found in the Holographic Academy and I'd strongly encourage anyone who's even looking at HoloLens to walk through that Academy online as it's a really useful way to get a quick-start on a number of topics and, with the emulator, you don't need to have a device to work through it.

At my session, I used a number of recorded ‘coding’ videos which I added a voice-over to ‘live’ on the day, but I was asked whether I might share them here with a voice-over added and so that's the purpose of this post.

The videos follow in order in that, generally, a later video might make use of something that happened in an earlier video.

In the live session, I have things to say before, during and after these demos but for this post I'll just make some text notes which hopefully will be enough to string the videos together.

Two other notes about these videos:

- They have been recorded from the screen and so they suffer a little in quality.

- They are inevitably going to get out of date with respect to the HoloToolkit and I don't intend to update them, which is another reason to always refer back to the official tutorials.

With those caveats in place…

Building a Quick 2D UWP App and Deploying to PC, HoloLens Emulator and HoloLens

In this first demo, we put together a UWP application from scratch and then see how it runs on PC and HoloLens and how we can deploy to HoloLens from Visual Studio either over USB or over WiFi;

Getting Hold of the HoloToolkit

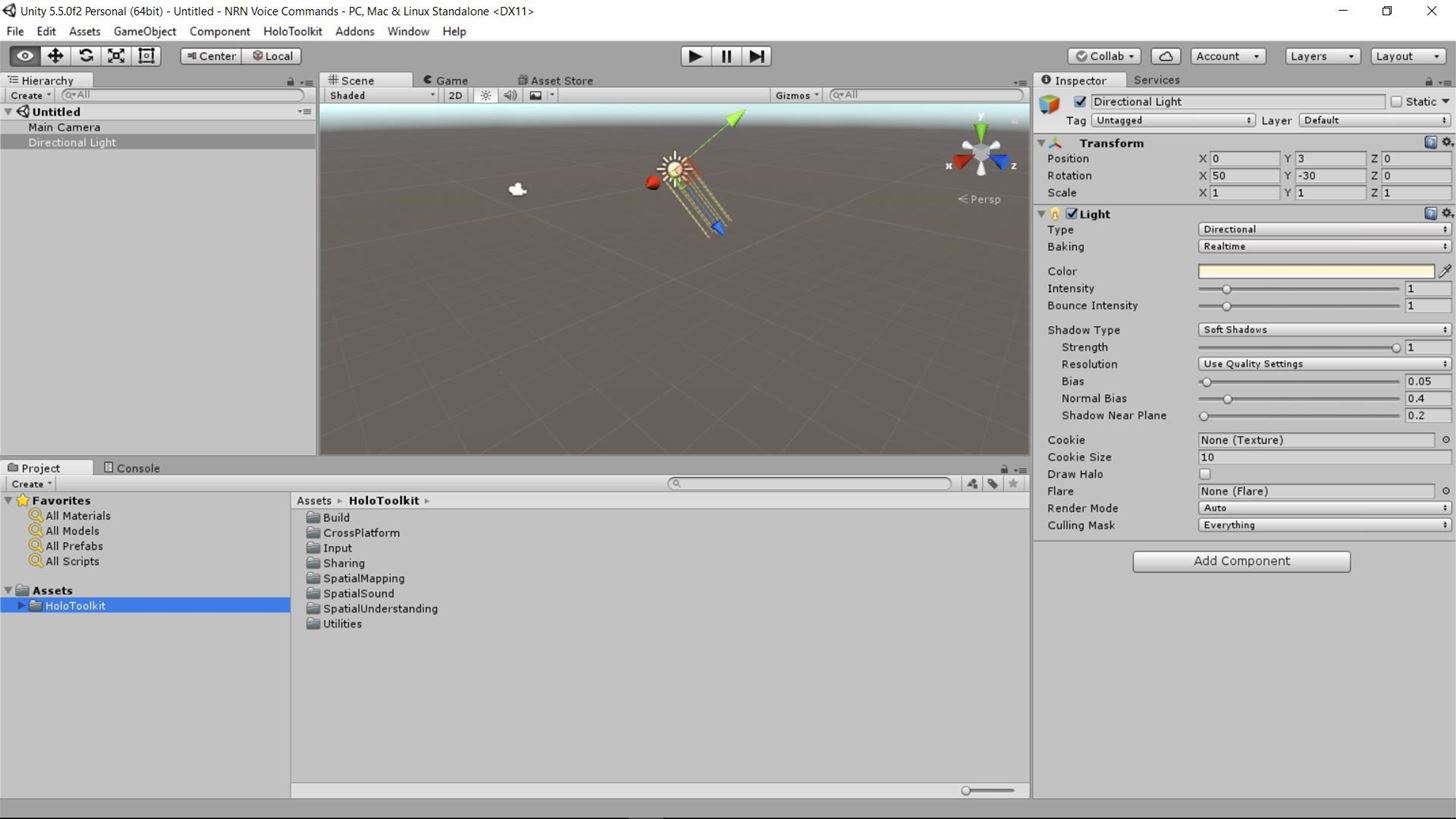

We are going to use the HoloToolkit for Unity in our 3D demos so this very short screen capture just shows how I got hold of it;

A 3D Hello World in Unity

Having got the HoloToolkit for Unity, we can now bring it into a blank project, set up our project and scene settings and make a basic app that we can deploy to the HoloLens to try out;

The Device is the Camera

I thought it was important to try and explain that the world co-ordinate system is presented with the device/user at the centre of it and that the user/device effectively acts as the camera here;

Adding Gaze

Knowing where the user is looking and what they are looking at is really important for an app on HoloLens and so we add the basics of detecting the user's gaze;

and then we can make this easier by adding in pieces from the HoloToolkit;

and we can also add a cursor to give the user visual feedback again using pieces from the HoloToolkit;
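To make the idea behind gaze detection concrete outside of Unity, here is a minimal Python sketch of the underlying geometry: cast a ray from the device position along the direction the user is facing and report the nearest object it hits. In Unity this check is typically done with `Physics.Raycast` from the main camera's position along its forward vector; the `Sphere` type and `gaze_hit` function below are hypothetical names used only for illustration, and spheres stand in for the holograms' colliders.

```python
import math
from dataclasses import dataclass


@dataclass
class Sphere:
    """Illustrative stand-in for a hologram's collider."""
    name: str
    center: tuple
    radius: float


def gaze_hit(origin, direction, spheres):
    """Return (name, distance) of the nearest sphere hit by the gaze ray, or None."""
    length = math.sqrt(sum(c * c for c in direction))
    d = tuple(c / length for c in direction)  # normalised gaze direction
    best = None
    for s in spheres:
        # Vector from the device (ray origin) to the sphere centre.
        oc = tuple(c - o for c, o in zip(s.center, origin))
        # Distance along the ray to the point closest to the centre.
        t_mid = sum(a * b for a, b in zip(oc, d))
        # Squared distance from the centre to the ray.
        dist_sq = sum(a * a for a in oc) - t_mid * t_mid
        if dist_sq > s.radius ** 2:
            continue  # the ray passes outside the sphere
        t_hit = t_mid - math.sqrt(s.radius ** 2 - dist_sq)
        if t_hit < 0:
            continue  # the hit is behind the user (or the user is inside)
        if best is None or t_hit < best[1]:
            best = (s.name, t_hit)
    return best


# The device sits at the origin looking down +Z, as in "the device is the camera".
holograms = [
    Sphere("near", (0.0, 0.0, 2.0), 0.5),
    Sphere("far", (0.0, 0.0, 5.0), 0.5),
    Sphere("side", (3.0, 0.0, 2.0), 0.5),
]
print(gaze_hit((0.0, 0.0, 0.0), (0.0, 0.0, 1.0), holograms))
```

A cursor, in these terms, is simply something drawn at `origin + distance * direction` for whichever object the ray reports.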

Adding Gesture

Time for the user to interact with the holograms and here we use the basic framework pieces to add support for a click (air-tap) gesture;

and once again we then revisit that to use the HoloToolkit to make it easier and better for us;

Adding Voice

Voice is a hugely important part of interacting with these applications and so we add support for our application to listen to voice commands via the HoloToolkit;

and then we add basic support for having our application speak back to us, again leaning on the HoloToolkit to get it done;
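The voice support described above boils down to mapping recognised phrases to actions. The sketch below shows only that mapping in plain Python; it does not perform any speech recognition or synthesis, and `KeywordDispatcher`, `register` and `on_phrase_recognized` are hypothetical names rather than HoloToolkit APIs. Each handler returns a string that stands in for what the app would speak back.

```python
from typing import Callable, Dict, Optional


class KeywordDispatcher:
    """Maps recognised phrases to handlers (illustrative only)."""

    def __init__(self) -> None:
        self._handlers: Dict[str, Callable[[], str]] = {}

    def register(self, phrase: str, handler: Callable[[], str]) -> None:
        # Normalise so that "Drop Cube" and "drop cube" match the same entry.
        self._handlers[phrase.strip().lower()] = handler

    def on_phrase_recognized(self, phrase: str) -> Optional[str]:
        """Run the handler for a phrase; return its spoken reply, or None."""
        handler = self._handlers.get(phrase.strip().lower())
        if handler is None:
            return None  # not one of our commands, so ignore it
        return handler()


state = {"gravity": False}


def drop_cube() -> str:
    state["gravity"] = True
    return "Dropping the cube"


dispatcher = KeywordDispatcher()
dispatcher.register("drop cube", drop_cube)
print(dispatcher.on_phrase_recognized("Drop Cube"))
print(dispatcher.on_phrase_recognized("open the pod bay doors"))
```

Keeping the phrase table separate from the handlers makes it easy to list the available commands to the user, which matters because voice commands are otherwise hard to discover.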

Adding Spatial Mapping

At the moment, our objects are simply falling through the physical world so it's time to add some spatial mapping support such that the physical environment mixes with the virtual one. For this demo, we simply use the prefab object from the HoloToolkit to add in spatial mapping and to visualise it;

Adding Spatial Sound

Last but not least, sound is critical in an environment where you might want to attract the user's attention to holograms which might be behind them or off to the left or right and so we set up our four cubes as sound sources and see if we can apply spatial sound to them. Note that here I'm just using a basic WAV file so I'm not sure it's going to give stunning results and especially not when recorded this way.

And that's it in terms of the demos that I used in this particular session – I hope this is helpful to the people who came along and perhaps a few more folks as well.

Controller and hand input

Setup and configuration

| | HTK 2017 | MRTK v2 |
|---|---|---|
| Type | Specific events for buttons, with input type info when relevant. | Action / Gesture based input, passed along via events. |
| Setup | Place the InputManager in the scene. | Enable the input system in the Configuration Profile and specify a concrete input system type. |
| Configuration | Configured in the Inspector, on each individual script in the scene. | Configured via the Mixed Reality Input System Profile and its related profiles, listed below. |

Related profiles:

- Mixed Reality Controller Mapping Profile

- Mixed Reality Controller Visualization Profile

- Mixed Reality Gestures Profile

- Mixed Reality Input Actions Profile

- Mixed Reality Input Action Rules Profile

- Mixed Reality Pointer Profile

Gaze Provider settings are modified on the Main Camera object in the scene.

Platform support components (e.g., Windows Mixed Reality Device Manager) must be added to their corresponding service's data providers.

Interface and event mappings

Some inputs no longer have their own dedicated events; instead, the event data now carries a MixedRealityInputAction. These actions are specified in the Input Actions profile and mapped to specific controllers and platforms in the Controller Mapping profile. Handlers for events like OnInputDown should now check which MixedRealityInputAction the event carries.
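As a rough illustration of that action-based pattern, the Python sketch below models a handler that receives a generic input-down event and only reacts when the event carries the action it was configured for. `InputAction`, `InputEventData` and `CubeHandler` are hypothetical stand-ins written for this example; they are not the MRTK types, which are C# and configured through the profiles described above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class InputAction:
    """Stand-in for an action defined in an input actions profile."""
    id: int
    description: str


@dataclass
class InputEventData:
    """Stand-in for the data passed to an input-down callback."""
    action: InputAction
    source: str  # e.g. "hand" or "left controller"


SELECT = InputAction(1, "Select")
MENU = InputAction(2, "Menu")


class CubeHandler:
    """Reacts only to the action it was configured with."""

    def __init__(self, select_action: InputAction) -> None:
        self.select_action = select_action
        self.selected = False

    def on_input_down(self, event: InputEventData) -> bool:
        # One callback receives every action, so filter on the action first.
        if event.action != self.select_action:
            return False
        self.selected = True
        return True


handler = CubeHandler(select_action=SELECT)
print(handler.on_input_down(InputEventData(MENU, "left controller")))
print(handler.on_input_down(InputEventData(SELECT, "hand")))
```

The point of the design is that the handler never asks which button or device produced the event; remapping a controller only changes the profile, not the handler.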

Related input systems:

| HTK 2017 | MRTK v2 | Action Mapping |
|---|---|---|
| IControllerInputHandler | IMixedRealityInputHandler | Mapped to the touchpad or thumbstick |
| IControllerTouchpadHandler | IMixedRealityInputHandler | Mapped to the touchpad |
| IFocusable | IMixedRealityFocusHandler | |
| IGamePadHandler | IMixedRealitySourceStateHandler | |
| IHoldHandler | IMixedRealityGestureHandler | Mapped to hold in the Gestures Profile |
| IInputClickHandler | IMixedRealityPointerHandler | |
| IInputHandler | IMixedRealityInputHandler | Mapped to the controller's buttons or hand tap |
| IManipulationHandler | IMixedRealityGestureHandler | Mapped to manipulation in the Gestures Profile |
| INavigationHandler | IMixedRealityGestureHandler | Mapped to navigation in the Gestures Profile |
| IPointerSpecificFocusable | IMixedRealityFocusChangedHandler | |
| ISelectHandler | IMixedRealityInputHandler | Mapped to trigger position |
| ISourcePositionHandler | `IMixedRealityInputHandler<Vector3>` or `IMixedRealityInputHandler<MixedRealityPose>` | Mapped to pointer position or grip position |
| ISourceRotationHandler | `IMixedRealityInputHandler<Quaternion>` or `IMixedRealityInputHandler<MixedRealityPose>` | Mapped to pointer position or grip position |
| ISourceStateHandler | IMixedRealitySourceStateHandler | |
| IXboxControllerHandler | `IMixedRealityInputHandler<float>` and `IMixedRealityInputHandler<Vector2>` | Mapped to the various controller buttons and thumbsticks |

Camera

| | HTK 2017 | MRTK v2 |
|---|---|---|
| Setup | Delete MainCamera, add MixedRealityCameraParent / MixedRealityCamera / HoloLensCamera prefab to scene or use Mixed Reality Toolkit > Configure > Apply Mixed Reality Scene Settings menu item. | MainCamera parented under MixedRealityPlayspace via Mixed Reality Toolkit > Add to Scene and Configure... |
| Configuration | Camera settings configuration performed on prefab instance. | Camera settings configured in the Mixed Reality Camera Profile. |

Speech

Keyword recognition

| | HTK 2017 | MRTK v2 |
|---|---|---|
| Setup | Add a SpeechInputSource to your scene. | Keyword service (e.g., Windows Speech Input Manager) must be added to the input system's data providers. |
| Configuration | Recognized keywords are configured in the SpeechInputSource's inspector. | Keywords are configured in the Mixed Reality Speech Commands Profile. |
| Event handlers | ISpeechHandler | IMixedRealitySpeechHandler |

Dictation

| | HTK 2017 | MRTK v2 |
|---|---|---|
| Setup | Add a DictationInputManager to your scene. | Dictation support requires a service (e.g., Windows Dictation Input Manager) to be added to the Input System's data providers. |
| Event handlers | IDictationHandler | IMixedRealityDictationHandler, IMixedRealitySpeechHandler |

Spatial awareness / mapping

Mesh

| | HTK 2017 | MRTK v2 |
|---|---|---|
| Setup | Add the SpatialMapping prefab to the scene. | Enable the Spatial Awareness System in the Configuration Profile and add a spatial observer (e.g., Windows Mixed Reality Spatial Mesh Observer) to the Spatial Awareness System's data providers. |
| Configuration | Configure the scene instance in the inspector. | Configure the settings on each spatial observer's profile. |

Planes

| | HTK 2017 | MRTK v2 |
|---|---|---|
| Setup | Use the SurfaceMeshesToPlanes script. | Not yet implemented. |

Spatial understanding

| | HTK 2017 | MRTK v2 |
|---|---|---|
| Setup | Add the SpatialUnderstanding prefab to the scene. | Not yet implemented. |
| Configuration | Configure the scene instance in the inspector. | Not yet implemented. |

Boundary

| | HTK 2017 | MRTK v2 |
|---|---|---|
| Setup | Add the BoundaryManager script to the scene. | Enable the Boundary System in the Configuration Profile. |
| Configuration | Configure the scene instance in the inspector. | Configure the settings in the Boundary Visualization profile. |

Sharing

| | HTK 2017 | MRTK v2 |
|---|---|---|
| Setup | Sharing service: Add Sharing prefab to the scene. UNet: Use SharingWithUNET example. | In progress |
| Configuration | Configure the scene instances in the inspector. | In progress |

UX

| | HTK 2017 | MRTK v2 |
|---|---|---|
| Button | Interactable Objects | Button |
| Interactable | Interactable Objects | Interactable |
| Bounding Box | Bounding Box | Bounding Box |
| App Bar | App Bar | App Bar |
| One Hand Manipulation (Grab and Move) | HandDraggable | Manipulation Handler |
| Two Hand Manipulation (Grab/Move/Rotate/Scale) | TwoHandManipulatable | Manipulation Handler |
| Keyboard | Keyboard prefab | System Keyboard |
| Tooltip | Tooltip | Tooltip |
| Object Collection | Object Collection | Object Collection |
| Solver | Solver | Solver |

Utilities

Some Utilities have been reconciled as duplicates with the Solver system. Please file an issue if any of the scripts you need are missing.

| HTK 2017 | MRTK v2 |
|---|---|
| Billboard | Billboard |
| Tagalong | RadialView or OrbitalSolver |
| FixedAngularSize | |
| FpsDisplay | Diagnostics System (in Configuration Profile) |
| NearFade | Built-in to Mixed Reality Toolkit Standard shader |